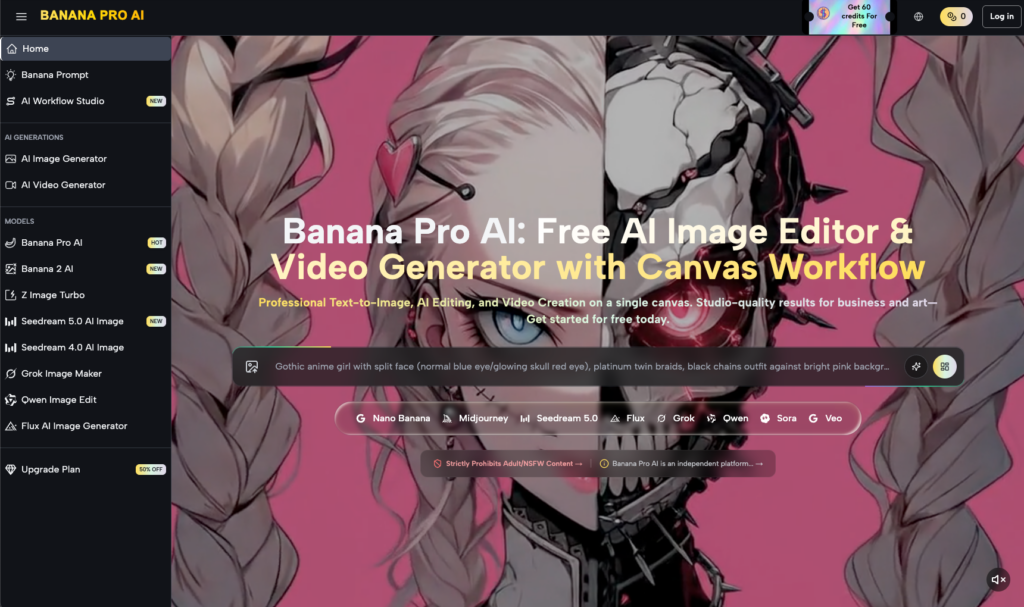

The transition from a static image to a moving sequence is not merely a technical step; it is the most critical pivot point in AI-assisted video production. In many marketing workflows, there is a tendency to view video generation as a corrective process, a way to “fix” or “animate” a mediocre concept. This is a fundamental misunderstanding of how diffusion-based video models actually operate. Whether you use a high-throughput engine like Nano Banana Pro or a specialized creative suite, the structural integrity of the first frame sets the ceiling on the quality of the resulting video.

If the source image contains ambiguous geometry, muddy lighting, or poorly defined edges, the video model spends its computational energy trying to resolve those inconsistencies rather than generating fluid motion. For performance marketers, this results in high “burn rates” of credits and time. Achieving a high-quality output requires a workflow that prioritizes the “pre-video” stage, ensuring the asset provided to the engine is ready for temporal expansion.

The Deterministic Nature of the Image-to-Video Pipeline

Modern AI video generators do not truly “understand” what they are seeing. They predict how the pixels in a scene are most likely to change over time, based on patterns learned during training. When you feed a source image into Nano Banana, the model treats that image as a starting point in a latent space. If that starting point is clear, meaning the foreground subject is distinctly separated from the background, the model can infer plausible motion far more reliably.

When the first frame is cluttered or lacks clear depth cues, the motion estimation often fails. This is where we see the “morphological collapse” common in low-tier AI video: a character’s limb blending into a wall, or a product’s logo sliding off its packaging. These aren’t just video glitches; they are symptoms of poor source asset quality.

It is important to acknowledge a significant limitation here: even with a perfect first frame, current AI models struggle with complex physics, such as liquid pouring or intricate knot-tying. No amount of source-frame optimization can fully compensate for the inherent difficulty the underlying Banana AI has with multi-object physical interactions.

Compositional Choices and Motion Physics

Marketers often choose frames that look “cool” rather than frames that are “functional” for animation. A functional frame for Nano Banana Pro is one where the composition allows for logical movement. For example, if you want a character to walk forward, a “tight” headshot is a poor first frame because the model has no spatial context for the rest of the body or the ground.

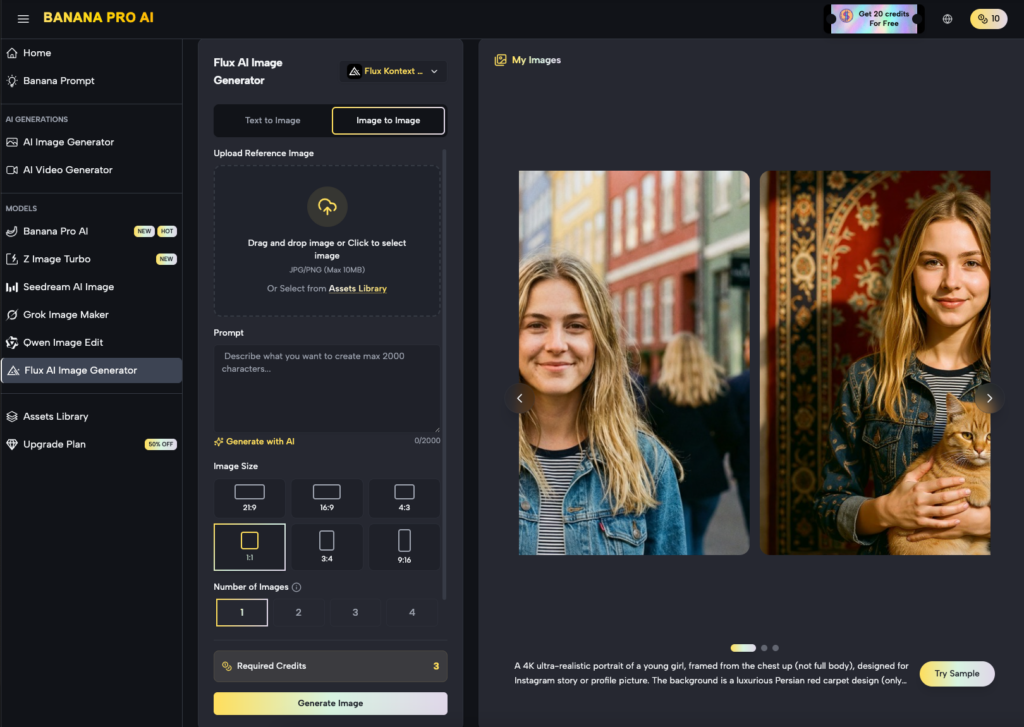

Successful creators use a wider composition, providing the model with enough environmental data to anchor the motion. This is where the AI Image Editor becomes an essential part of the video workflow. Instead of jumping straight to generation, a systems-minded operator will use the editor to extend the background, sharpen the subject’s edges, or remove distracting elements that might snag the motion vectors.

The Role of Lighting Gradients

Lighting is often the silent killer of video consistency. In a static image, a “dramatic” shadow might look intentional. In video generation, if the shadow has no clear source, the AI may interpret it as a solid object or a hole in the geometry. The result is often flickering: the engine re-renders the shadow differently every few frames, creating a strobe effect that ruins the professional feel of the ad creative.

A clean, balanced lighting setup in the source frame provides a more stable foundation. Using the specialized tools within Banana Pro, creators can normalize the lighting of an image before it enters the video pipeline. This pre-processing reduces the “noise” the video generator has to filter out, leading to a much smoother temporal flow.

Leveraging the Workflow Studio for Asset Refinement

The traditional approach to AI video is often linear: prompt, generate, hope for the best. A more sophisticated, commercial approach treats the process as a modular assembly line. Within the context of the Workflow Studio, the first frame is treated as a master template.

Before committing to a full video render, the image should be scrutinized for “edge bleed.” Edge bleed occurs when the colors of the subject slightly overlap with the background pixels. Although this overlap is barely noticeable in a still image, a video model like Nano Banana may interpret the overlapping pixels as a single mass, causing the subject to “drag” the background with it when it moves.

Using the AI Image Editor to increase the contrast at the subject’s boundaries can solve this. By sharpening the “mask” of the subject, you essentially tell the video engine exactly where the object ends and the environment begins.
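One rough way to put a number on edge bleed is to measure how much brightness changes across the subject boundary. The sketch below is an illustration only: it assumes you already have a grayscale image and a binary subject mask as nested lists, and `boundary_contrast` is a hypothetical helper, not a feature of any Nano Banana tool.

```python
def boundary_contrast(gray, mask):
    """Mean absolute brightness difference across subject/background boundaries.

    gray: 2D list of brightness values (0-255).
    mask: 2D list of 0/1 flags, 1 where the pixel belongs to the subject.
    """
    height, width = len(gray), len(gray[0])
    diffs = []
    for y in range(height):
        for x in range(width):
            # Compare each pixel with its right and lower neighbour only,
            # so every adjacent pair is visited exactly once.
            for ny, nx in ((y, x + 1), (y + 1, x)):
                if ny < height and nx < width and mask[y][x] != mask[ny][nx]:
                    diffs.append(abs(gray[y][x] - gray[ny][nx]))
    return sum(diffs) / len(diffs) if diffs else 0.0


# Toy 1x6 strips: subject on the left (mask = 1), background on the right.
mask = [[1, 1, 1, 0, 0, 0]]
clean_edge = [[200, 200, 200, 40, 40, 40]]      # hard transition
bleeding_edge = [[200, 200, 150, 110, 40, 40]]  # subject brightness leaks outward

print(boundary_contrast(clean_edge, mask))     # 160.0
print(boundary_contrast(bleeding_edge, mask))  # 40.0
```

A low score relative to the overall contrast of the image suggests the boundary is soft and may be worth sharpening before the frame goes to video.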

Technical Calibration and the Resolution Trap

There is a common misconception that simply using a “High Definition” source image will guarantee a high-quality video. In reality, pixel density is less important than pixel clarity. A 4K image that is slightly out of focus or has heavy film grain will produce a worse video than a 1080p image with sharp, clean lines.
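The difference between pixel density and pixel clarity can be illustrated with a common focus heuristic, the variance of the Laplacian. This is a minimal sketch on synthetic data, not a description of how any video model scores its input; `laplacian_variance` and `vertical_edge` are names introduced here for illustration.

```python
def laplacian_variance(gray):
    """Variance of a 4-neighbour Laplacian; higher means crisper edges."""
    height, width = len(gray), len(gray[0])
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            responses.append(
                gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1]
                - 4 * gray[y][x]
            )
    if not responses:  # image too small to have interior pixels
        return 0.0
    mean = sum(responses) / len(responses)
    return sum((r - mean) ** 2 for r in responses) / len(responses)


def vertical_edge(size, ramp):
    """Square test image: dark left half, bright right half.

    `ramp` is the number of pixels over which the edge fades in,
    so ramp=1 is a hard edge and larger values imitate soft focus.
    """
    start = size // 2 - ramp // 2
    row = []
    for x in range(size):
        t = min(max((x - start + 1) / ramp, 0.0), 1.0)
        row.append(255 * t)
    return [row[:] for _ in range(size)]


small_sharp = vertical_edge(size=16, ramp=1)   # low resolution, hard edge
large_soft = vertical_edge(size=64, ramp=16)   # 4x the resolution, soft edge

print(laplacian_variance(small_sharp) > laplacian_variance(large_soft))  # True
```

The smaller image scores higher because its edge is crisp, which mirrors the point above: more pixels do not help if the edges they describe are soft.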

Nano Banana Pro performs best when the source asset has a high signal-to-noise ratio. High-frequency textures, like the fine weave of a fabric or the individual leaves on a distant tree, often create a “crawling” effect in AI video. This is because the model cannot perfectly track every tiny detail from one frame to the next at 24 or 30 frames per second.

To mitigate this, operators should consider simplifying high-frequency textures in the source frame. Softening the background or reducing unnecessary detail in non-essential areas of the image allows the model to focus its “attention” on the primary motion of the subject.
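As a sketch of what "simplifying non-essential areas" can mean in practice, the function below box-blurs only the pixels outside a subject mask. It assumes a grayscale image and a binary mask as nested lists; `soften_background` is a hypothetical helper, and a real workflow would more likely use an image editor or an imaging library.

```python
def soften_background(gray, mask, radius=1):
    """Box-blur pixels outside the subject mask; keep subject pixels untouched.

    gray: 2D list of brightness values.
    mask: 2D list of 0/1 flags, 1 where the pixel belongs to the subject.
    """
    height, width = len(gray), len(gray[0])
    out = [row[:] for row in gray]
    for y in range(height):
        for x in range(width):
            if mask[y][x]:
                continue  # leave the subject at full detail
            # Average only background neighbours, so subject brightness
            # never leaks into the background near the boundary.
            window = [
                gray[ny][nx]
                for ny in range(max(0, y - radius), min(height, y + radius + 1))
                for nx in range(max(0, x - radius), min(width, x + radius + 1))
                if not mask[ny][nx]
            ]
            out[y][x] = sum(window) / len(window)
    return out


# Busy checkerboard background with a flat 2x2 subject in the lower right.
gray = [
    [0, 255, 0, 255],
    [255, 0, 255, 0],
    [0, 255, 200, 200],
    [255, 0, 200, 200],
]
mask = [
    [0, 0, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
softened = soften_background(gray, mask)

print(softened[0][0])  # 127.5 (checkerboard detail averaged away)
print(softened[2][2])  # 200 (subject pixel unchanged)
```

Excluding subject pixels from the average is a deliberate choice: blurring across the boundary would reintroduce the kind of edge bleed described earlier.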

The Commercial Cost of Poor Preparation

From a performance marketing perspective, the goal is to lower the Cost Per Effective Creative (CPEC): total generation spend divided by the number of usable videos. If a team generates 50 videos and only 2 are usable due to warping and flickering, it is paying for 25 generations per usable creative.

By spending 10 minutes refining the first frame (adjusting the composition, fixing the lighting, and clearing the edges), the “hit rate” of usable videos can jump from around 5% to 40% or higher. This shift in focus from “quantity of generations” to “quality of source” is what separates hobbyists from professional creative operations.
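The arithmetic behind these figures is simple enough to sketch. The example assumes CPEC means total generation spend divided by usable videos, uses a hypothetical cost of one credit per generation, and ignores the cost of the preparation time itself.

```python
def cost_per_effective_creative(total_generations, usable, cost_per_generation):
    """Total spend divided by the number of usable videos."""
    if usable == 0:
        raise ValueError("no usable creatives: CPEC is undefined")
    return total_generations * cost_per_generation / usable


COST = 1.0  # hypothetical cost of one generation, in arbitrary credit units

before = cost_per_effective_creative(50, usable=2, cost_per_generation=COST)
after = cost_per_effective_creative(50, usable=20, cost_per_generation=COST)

print(before)          # 25.0 credits per usable video (2 of 50 usable)
print(after)           # 2.5 credits per usable video (40% hit rate)
print(before / after)  # 10.0
```

Under these assumptions, the same 50 generations yield ten times as many usable creatives, so each one costs a tenth as much.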

We must also be realistic about the current state of the technology: there is still a level of unpredictability in how any AI, including Banana AI, interprets a prompt. Even with a perfect first frame, the “seed” of the generation introduces a stochastic element that can sometimes lead to unexpected results. This is why the ability to iterate quickly on the first frame is more valuable than any individual “lucky” generation.

Managing Subject Complexity in Nano Banana Pro

When working with Nano Banana Pro, the complexity of the subject matter significantly impacts the stability of the output. Human faces and hands remain the most difficult elements for any video generator. If your first frame features a person with six fingers or a distorted eye, the video model will not “fix” these errors; it will animate them, often making them more prominent as the face moves.

This makes the “Image-to-Image” step vital. Before moving to video, any anatomical or structural errors in the first frame must be corrected. A “clean” subject in the static phase is the only way to ensure a recognizable subject in the motion phase.

Background Stability and Anchor Points

A stable video needs anchor points—parts of the frame that do not move. If every pixel in your first frame is intended to be in motion, the model loses its reference for depth. This is why “Cinemagraph” styles are so popular in AI video; they hold the majority of the frame static while animating a single, high-impact element.

When composing your initial asset, think about what should remain still. A solid ground plane or a fixed architectural element provides the model with a “stage.” Without this stage, the subject often appears to be floating or sliding across the screen without weight.

Structural Integrity and the Diffusion Path

A diffusion model generates an image by starting with pure noise and gradually refining it. In a simplified view of an Image-to-Video workflow, the model starts from your source image, adds noise to it, and then “denoises” the result into a new frame that differs slightly from the first.
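The noising step can be sketched with the standard forward process used by DDPM-style diffusion models, where a signal is blended with Gaussian noise. This is a toy illustration on a one-dimensional signal; whether Nano Banana uses this exact formulation is not stated here, and `add_noise` and `keep` are illustrative names.

```python
import math
import random


def add_noise(signal, keep):
    """Blend a signal with Gaussian noise, DDPM-style.

    `keep` plays the role of the cumulative alpha term: 1.0 keeps the
    source untouched, 0.0 replaces it with pure noise.
    """
    return [
        math.sqrt(keep) * value + math.sqrt(1.0 - keep) * random.gauss(0.0, 1.0)
        for value in signal
    ]


def correlation(a, b):
    """Pearson correlation between two equal-length sequences."""
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


random.seed(0)
# Stand-in for one row of a normalised source frame: a hard-edged silhouette.
source = [-1.0] * 500 + [1.0] * 500

lightly_noised = add_noise(source, keep=0.9)
heavily_noised = add_noise(source, keep=0.1)

print(correlation(source, lightly_noised) > correlation(source, heavily_noised))  # True
```

The more noise is added, the less of the source structure survives for the denoiser to follow, which is why a frame with weak structure to begin with gives the model so much room to drift.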

If your first frame is structurally weak, meaning the shapes are not well-defined, the denoising process has too much freedom. It will start to deviate from the original shape almost immediately. Two or three seconds into the video, your subject may no longer look like your brand’s product.

Using Nano Banana effectively involves creating a first frame that is “unambiguous.” High contrast, clear silhouettes, and logical spatial relationships keep the diffusion path on track. This is especially true for brand-specific assets where the shape of a bottle or a logo must remain consistent.

Practical Checklist for First-Frame Optimization

To ensure the highest possible quality for downstream video, creators should follow a rigorous pre-flight checklist for their source assets:

- Check for Anatomical Accuracy: Use the AI Image Editor to fix hands, eyes, and limbs.

- Define the Depth: Ensure there is a clear foreground, midground, and background.

- Simplify Textures: Reduce noise in areas where motion is not required.

- Balance the Lighting: Avoid “floating” shadows or ambiguous light sources that could lead to flickering.

- Test the Composition: Ensure the subject has enough “room to move” within the frame without hitting the edges.
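For teams that want to enforce the checklist above in a pipeline, it can be captured as a small data structure. This is a minimal sketch: the class and field names are hypothetical, and each flag is assumed to be set by a human reviewer or an upstream check.

```python
from dataclasses import dataclass, fields


@dataclass
class FirstFrameChecklist:
    """One flag per checklist item; True means the item has been verified."""
    anatomy_correct: bool = False
    depth_layers_clear: bool = False
    textures_simplified: bool = False
    lighting_balanced: bool = False
    room_to_move: bool = False

    def failed_items(self):
        """Names of checklist items that have not been verified yet."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def ready_for_video(self):
        """True only when every checklist item has been verified."""
        return not self.failed_items()


frame = FirstFrameChecklist(
    anatomy_correct=True,
    depth_layers_clear=True,
    textures_simplified=True,
    lighting_balanced=False,
    room_to_move=True,
)
print(frame.ready_for_video())  # False
print(frame.failed_items())     # ['lighting_balanced']
```

Defaulting every flag to `False` means a frame cannot reach the video stage by omission; each item has to be explicitly signed off.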

This level of discipline may seem tedious in a world of “one-click” solutions, but for teams building repeatable asset pipelines, it is the only way to achieve professional results at scale. The power of Banana Pro lies not just in its ability to generate motion, but in its suite of tools that allow for this level of granular control over the starting point.

Summary of the Workflow Shift

The future of AI video isn’t about better prompts; it’s about better assets. As models like Nano Banana Pro continue to evolve, they will become more sensitive to the data they are fed. The “first frame” is no longer just a starting point; it is the source of truth for the entire temporal sequence.

By treating image generation and image editing as the most important parts of the video creation process, marketers can reduce waste, improve brand consistency, and produce content that stands up to the scrutiny of a high-conversion ad campaign. The goal is to move away from the “lottery” mentality of AI generation and toward a disciplined, systems-minded approach where every pixel in the first frame has a purpose in the motion that follows.