Software quality teams face a familiar problem: shipping faster while keeping defect rates low. Manual test authoring works against both goals. Automatic test case generation lets intelligent tools draft, organise, and maintain test cases on your team’s behalf, freeing engineers to focus on work that requires genuine judgment.

This article covers the five most significant advantages of automated test case generation and the practices that help QA teams implement it effectively.

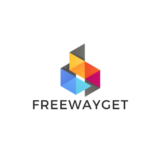

Key Benefits of Automated Test Case Generation

Most QA teams underestimate how much time goes into writing tests versus actually running them. Once authoring is automated, your engineers stop functioning as documentation writers and start working as quality strategists. That change compounds across every stage of the delivery cycle, and its effects are felt well beyond the QA team itself.

An AI test case generator changes what your team spends its attention on, and that is where the real value lies.

1. Faster Test Creation and Reduced Manual Effort

Writing test cases manually is a multi-step process. Your tester must analyse requirements, identify scenarios, draft steps, and review everything for gaps before a single test runs. For one moderately complex feature, this can easily consume half a working day, and that time adds up fast across a full sprint.

Automated test case creation compresses that cycle significantly. Feed a tool a user story or specification document and it returns a structured, reviewable test suite almost immediately. That speed gain shows up across the entire sprint, not just in individual tasks.

With time freed from authoring, your engineers can redirect focus toward work that genuinely matters:

- Exploratory testing that uncovers unexpected failure modes

- Root cause analysis on recurring defects

- Risk-based prioritisation across the release scope

- Reviewing and refining generated output for accuracy

For teams practising continuous delivery, this is particularly valuable. A pipeline should never stall waiting on test documentation, and with generation automated, it no longer has to.

2. Broader and More Consistent Test Coverage

Coverage gaps are among the most common causes of production defects. Under deadline pressure, testers gravitate toward familiar scenarios and happy paths, which means boundary conditions, unusual input combinations, and less obvious failure modes get skipped, not through carelessness but simply because time runs out.

Automated test case generation applies systematic logic to every input. Coverage no longer depends on who wrote the tests or when, because the tool works through equivalence classes and boundary values consistently every time.
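To make the idea of working through equivalence classes and boundary values concrete, here is a minimal sketch in Python. The `IntField` type and the `boundary_cases` function are hypothetical names invented for this illustration; they do not come from any particular tool, and a real generator would handle many more input types.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IntField:
    """Hypothetical description of an integer input with an inclusive valid range."""
    name: str
    minimum: int
    maximum: int


def boundary_cases(field: IntField) -> list[tuple[int, bool]]:
    """Return (value, expected_valid) pairs using boundary value analysis.

    Covers the values just outside, on, and just inside each boundary,
    plus one representative from the middle of the valid equivalence class.
    """
    lo, hi = field.minimum, field.maximum
    candidates = [lo - 1, lo, lo + 1, (lo + hi) // 2, hi - 1, hi, hi + 1]
    seen: set[int] = set()
    cases = []
    for value in candidates:
        if value in seen:  # narrow ranges produce duplicate candidates
            continue
        seen.add(value)
        cases.append((value, lo <= value <= hi))
    return cases


# Illustrative field: an age input that accepts 18 to 65 inclusive.
age = IntField(name="age", minimum=18, maximum=65)
for value, expected_valid in boundary_cases(age):
    print(f"{age.name}={value} -> expect {'accept' if expected_valid else 'reject'}")
```

Because the same function runs for every field, the values 17 and 66 are checked for rejection every time, regardless of who is on the team that sprint.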

That consistency compounds further over time. Consider what uneven manual coverage typically produces:

- Overlapping tests in well-understood areas

- Blind spots in complex or recently changed modules

- Suites that reflect individual testers’ habits more than actual risk

- Maintenance debt that grows with every release

By generating tests from the same rules every sprint, your suite stays uniform and traceable. New team members inherit a coherent coverage model, not a patchwork built up over years by a dozen people with different approaches.

3. Improved Accuracy Through AI Test Case Generation

Rule-based automation follows fixed patterns, which works well for predictable scenarios. AI test case generation goes further by parsing natural language requirements, inferring implicit constraints from context, and drawing on historical defect data to prioritise scenarios most likely to expose real bugs.

The practical effect is a test suite aligned with actual user behaviour, not just documented specifications. The gains teams most often look for from AI test case creation tools are:

- Fewer false positives cluttering test results

- Better signal-to-noise ratios across the suite

- Critical defects caught before they reach production

- Test priorities that reflect real usage patterns, not just written requirements

On top of that, the AI accumulates knowledge from past failures and applies it going forward. A manually maintained test library rarely achieves this, because institutional knowledge tends to live in people’s heads rather than in the tests themselves.
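As a rough illustration of using historical defect data to prioritise scenarios, the sketch below orders tests by how many past defects were recorded against the module each test exercises. This is a simple frequency heuristic, not how any specific AI tool works; the `prioritise` function, the test names, and the module names are all assumptions made for the example.

```python
from collections import Counter


def prioritise(test_modules: dict[str, str], past_defects: list[str]) -> list[str]:
    """Order test names so tests for modules with more recorded defects come first.

    test_modules maps a test name to the module it exercises;
    past_defects lists the module blamed for each historical defect.
    """
    defect_counts = Counter(past_defects)
    # Sort by defect count descending, then by name for a repeatable order.
    return sorted(
        test_modules,
        key=lambda name: (-defect_counts[test_modules[name]], name),
    )


# Hypothetical data for illustration only.
tests = {
    "test_login": "auth",
    "test_checkout_total": "billing",
    "test_profile_edit": "profile",
}
defects = ["billing", "auth", "billing"]

print(prioritise(tests, defects))
# prints ['test_checkout_total', 'test_login', 'test_profile_edit']
```

The point of the sketch is that the defect history lives in data the suite can consume, so the ordering survives even when the people who remember those failures move on.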

4. Lower Testing Costs Over Time

There is an upfront cost to adopting new QA tooling, whether in licensing, configuration, or onboarding time. That cost is real, and your team will feel it in the first few months. Over time, however, the economics move clearly in favour of automation.

Once infrastructure is in place, generating tests for a new feature costs a fraction of what manual authoring would. Regression cycles that previously took weeks of QA effort can run overnight, and senior engineers spend less time on routine documentation and more on decisions that affect product quality directly.

The savings are most pronounced in organisations that release frequently or maintain several product lines. As coverage grows without a proportional increase in headcount, the long-term financial case for automated test case creation becomes hard to argue against.
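The trade-off between upfront cost and per-feature savings can be sketched as a simple break-even calculation. The function name and every figure below are hypothetical placeholders; the four manual hours merely echo the "half a working day" estimate used earlier, and your own measurements should replace all of them.

```python
import math


def break_even_features(upfront_hours: float,
                        manual_hours_per_feature: float,
                        automated_hours_per_feature: float) -> int:
    """Number of features after which cumulative automated cost is no higher than manual cost."""
    saving = manual_hours_per_feature - automated_hours_per_feature
    if saving <= 0:
        raise ValueError("automation must save time per feature to break even")
    return math.ceil(upfront_hours / saving)


# All figures are hypothetical: 120 hours of setup, 4 hours of manual authoring
# per feature, and 1 hour to review generated tests per feature.
print(break_even_features(upfront_hours=120,
                          manual_hours_per_feature=4,
                          automated_hours_per_feature=1))
# prints 40
```

Under these assumed numbers the investment pays back after 40 features, which is why teams that release frequently tend to reach the break-even point sooner.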

5. Faster Software Delivery Without Sacrificing Quality

Speed and quality pull against each other in most manual testing workflows, and that tension is one of the more frustrating parts of running a QA programme at pace. Automation reduces it considerably. When test cases are generated automatically and connected to a CI/CD pipeline, every code commit is validated against an up-to-date suite, with no manual steps in the test run itself.

The delivery benefits are concrete:

- Defects surface earlier in the cycle, when they cost least to fix

- Release gates stop being held up by documentation delays

- QA becomes a continuous activity woven into development, not a phase at the end

- Your team gains confidence at the point of deployment backed by evidence, not assumption

Taken together, these gains mean organisations that combine automated test case creation with continuous integration can shorten release cycles without the quality compromises that manual shortcuts typically introduce.
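One way a pipeline might check that the suite really is up to date is to record a fingerprint of each requirement when its tests are generated, then compare on every commit. The sketch below assumes that approach; `fingerprint`, `stale_requirements`, and the requirement IDs are hypothetical and not part of any particular CI product.

```python
import hashlib


def fingerprint(requirement_text: str) -> str:
    """Hash a requirement, ignoring case and whitespace differences."""
    normalised = " ".join(requirement_text.lower().split())
    return hashlib.sha256(normalised.encode("utf-8")).hexdigest()


def stale_requirements(current: dict[str, str],
                       generated_from: dict[str, str]) -> list[str]:
    """Return IDs of requirements whose tests are missing or were generated from older text.

    current maps requirement ID -> current requirement text;
    generated_from maps requirement ID -> fingerprint recorded at generation time.
    """
    return sorted(
        req_id
        for req_id, text in current.items()
        if generated_from.get(req_id) != fingerprint(text)
    )


# Hypothetical requirements for illustration only.
current = {
    "REQ-1": "User can reset password by email",
    "REQ-2": "Cart total includes tax",
}
recorded = {"REQ-1": fingerprint("User can reset password by email")}

print(stale_requirements(current, recorded))
# prints ['REQ-2']
```

A CI step could fail the build, or trigger regeneration, whenever the returned list is non-empty.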

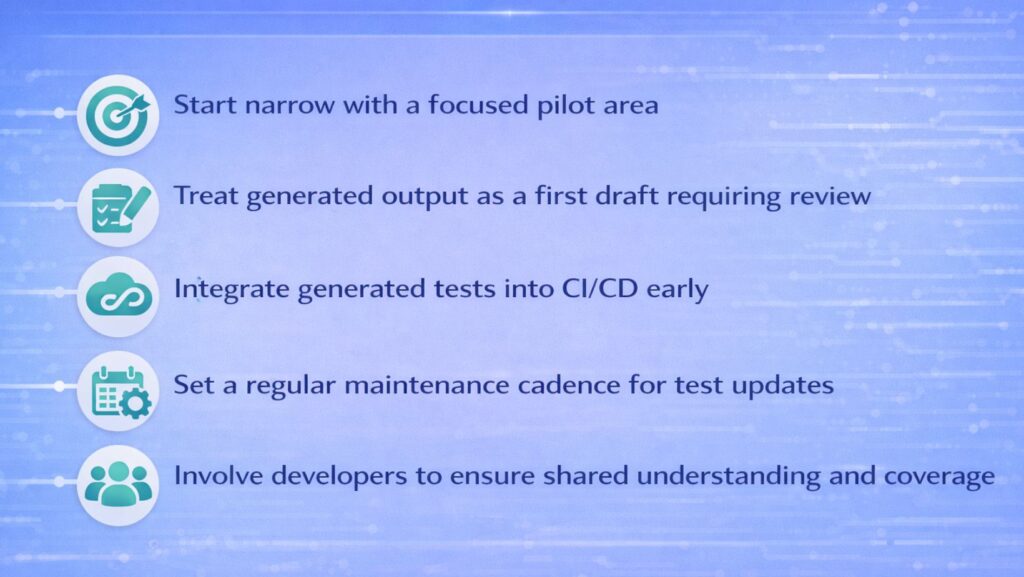

Best Practices for Implementation

Getting value from automated test case generation depends on how your team introduces it. A few principles make a consistent difference in practice.

- Start narrow. Pick one feature area, generate tests for it, review the output carefully, and refine your approach before expanding further. A focused pilot surfaces tool limitations and workflow gaps early, when they are still easy to address.

- Treat generated output as a first draft. Automated test case creation requires a human review step. A trained QA engineer should go through generated tests for gaps, misaligned assumptions, and redundancy before they enter the suite.

- Integrate early. Connect generated tests to version control and CI from the beginning. Tests that sit outside the main workflow fall out of sync with the codebase quickly, and building that connection early is considerably easier than fixing it later.

- Set a maintenance cadence. Requirements change, and your tests need to reflect those changes or they start producing false confidence. A regular review cycle, tied to sprint retrospectives or release planning, keeps the suite accurate over time.

- Involve developers. QA automation produces better results when engineers understand what is being tested and why. Shared ownership leads to faster feedback loops and fewer gaps between what the code does and what the tests verify.
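The human review step described above can be supported by small scripts that flag obvious redundancy before an engineer reads the output. The following is a minimal sketch under the assumption that each generated test is available as a list of step strings; `normalise`, `find_duplicates`, and the test IDs are hypothetical. It only catches tests whose steps match after normalisation, so judging near-duplicates and misaligned assumptions still falls to the reviewer.

```python
from collections import defaultdict


def normalise(step: str) -> str:
    """Lowercase a step and collapse whitespace so trivial differences do not hide duplicates."""
    return " ".join(step.lower().split())


def find_duplicates(tests: dict[str, list[str]]) -> list[list[str]]:
    """Group test names whose normalised step sequences are identical."""
    groups: dict[tuple[str, ...], list[str]] = defaultdict(list)
    for name, steps in tests.items():
        groups[tuple(normalise(s) for s in steps)].append(name)
    return [sorted(names) for names in groups.values() if len(names) > 1]


# Hypothetical generated tests for illustration only.
generated = {
    "TC-1": ["Open login page", "Enter valid credentials", "Click Sign in"],
    "TC-2": ["open login page", "Enter  valid credentials", "click sign in"],
    "TC-3": ["Open login page", "Enter invalid password", "Click Sign in"],
}

print(find_duplicates(generated))
# prints [['TC-1', 'TC-2']]
```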

Conclusion

Automated test case generation addresses the most persistent friction points in QA: slow authoring cycles, uneven coverage, test suites that age poorly, and delivery timelines held up by manual processes. AI test case creation makes it possible to maintain broad, accurate coverage without the time investment that manual approaches demand.

The teams that get the most from these tools start with a clear scope, integrate thoughtfully, and build a culture where generated tests serve as a foundation that human expertise builds on. That combination produces a QA programme capable of keeping pace with modern delivery expectations, without trading away the quality that makes fast releases worth shipping.